Friction is not a fundamental force of nature, but an emergent property of energy dissipation that has eluded a complete analytical description since the time of Leonardo da Vinci. In the quantum realm, this dissipation manifests as decoherence, the primary antagonist of stable computation. By eliminating ad-hoc dissipation terms from classical friction models and applying those insights to evanescent modes in light channels, engineers are now suppressing the noise that destroys quantum information. [arXiv:1708.03415]

This matters because the transition from physical qubits to a stable logical qubit requires a precise understanding of how energy leaks into the environment. The timing is not coincidental: as hardware scales toward the thousand-qubit mark, the industry is shifting from brute-force hardware cooling to the sophisticated management of interatomic dipole-dipole interactions. By treating a waveguide as a frictionless Tomlinson system, researchers are creating the first truly closed-loop environments for quantum information processing.

How It Works

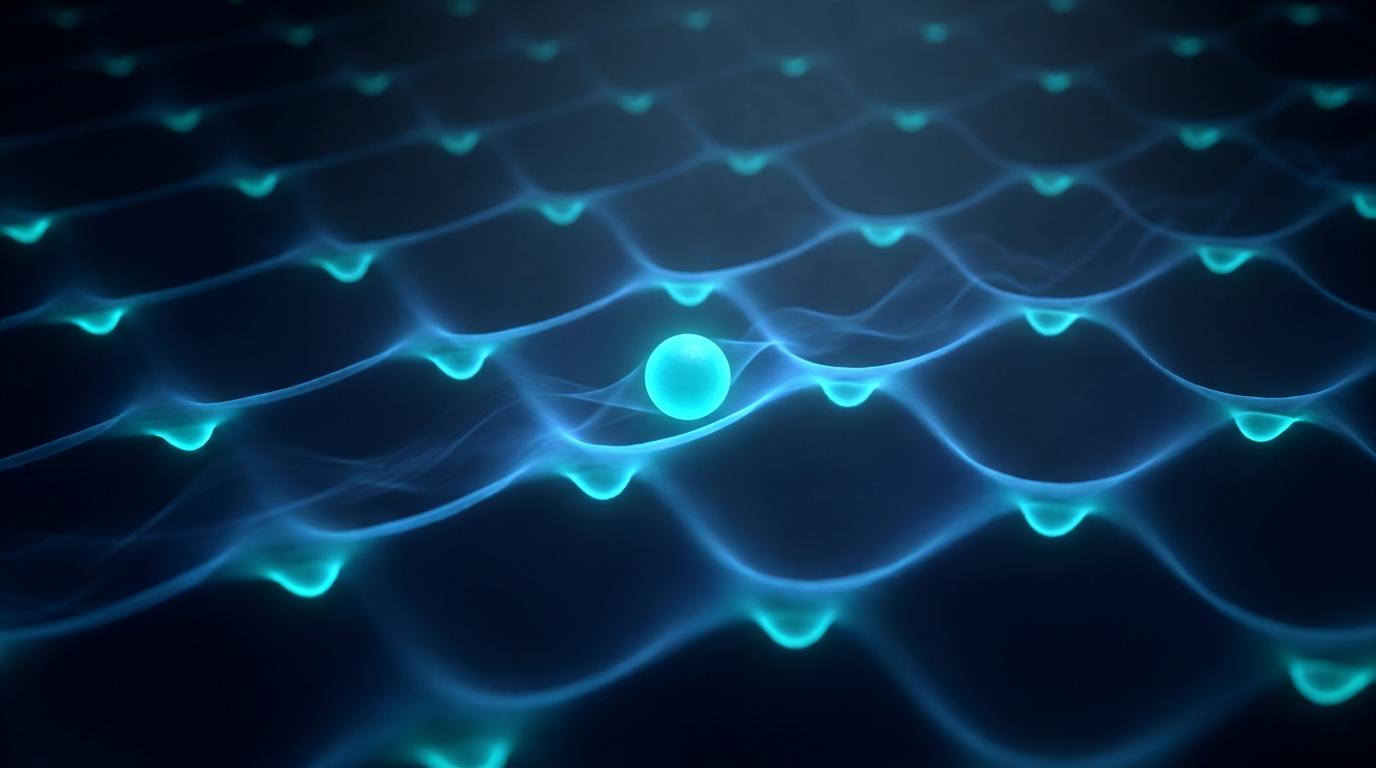

The core mechanism relies on a refined version of the Tomlinson model, which simulates a single atom sliding over a periodic surface of harmonic potentials. Unlike previous iterations that relied on artificial damping factors, this new approach solves Newton's equations numerically to reveal how friction emerges naturally from atomic-scale interactions. The researchers note that "the atomic-scale analysis of the interaction between sliding surfaces is necessary to understand the non-conservative lateral forces and the mechanism of energy dissipation."
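To make the mechanism concrete, here is a minimal Python sketch of a damping-free, Tomlinson-style simulation. It is not the researchers' code. The Gaussian tip-substrate repulsion, the unit masses, the velocity Verlet integrator, and every parameter value (`k_sub`, `k_drive`, `v0`, `sigma`, `v_support`) are illustrative assumptions. A tip atom is pulled by a spring over substrate atoms that each sit in their own harmonic well, and the only bookkeeping is energy: whatever the moving support puts in must end up in the tip, the interaction, or the substrate vibrations.

```python
import numpy as np

def simulate_tomlinson(n_sites=12, a=1.0, k_sub=20.0, k_drive=2.0, v0=1.0,
                       sigma=0.25, v_support=0.05, dt=0.002, n_steps=50_000):
    """Drag a tip atom (unit mass) over independently bound substrate atoms.

    All parameters are hypothetical, dimensionless values chosen for illustration.
    No damping term appears anywhere in the equations of motion.
    """
    sites = a * np.arange(n_sites)   # rest positions of the substrate atoms
    x_start = -2.0 * a               # tip starts well to the left of the chain
    x, vx = x_start, v_support       # tip position and velocity
    u = np.zeros(n_sites)            # substrate displacements from rest
    vu = np.zeros(n_sites)           # substrate velocities

    def forces(x, u, t):
        r = x - (sites + u)                                         # tip-atom separations
        pair = v0 * r / sigma**2 * np.exp(-r**2 / (2 * sigma**2))   # repulsion felt by the tip
        f_drive = k_drive * (x_start + v_support * t - x)           # pull of the moving support
        return f_drive + pair.sum(), -k_sub * u - pair, f_drive

    def energies(x, vx, u, vu, t):
        r = x - (sites + u)
        e_int = float(np.sum(v0 * np.exp(-r**2 / (2 * sigma**2))))
        e_tip = 0.5 * vx**2 + 0.5 * k_drive * (x_start + v_support * t - x)**2
        e_sub = float(0.5 * np.sum(vu**2) + 0.5 * k_sub * np.sum(u**2))
        return e_tip, e_sub, e_int

    e_initial = sum(energies(x, vx, u, vu, 0.0))
    fx, fu, f_drive = forces(x, u, 0.0)
    work = 0.0        # energy fed in by the moving support
    force_sum = 0.0   # accumulator for the time-averaged lateral force
    for step in range(n_steps):
        f_prev = f_drive
        # Velocity Verlet: half kick, drift, new forces, half kick.
        vx += 0.5 * dt * fx
        vu += 0.5 * dt * fu
        x += dt * vx
        u += dt * vu
        fx, fu, f_drive = forces(x, u, (step + 1) * dt)
        vx += 0.5 * dt * fx
        vu += 0.5 * dt * fu
        work += 0.5 * (f_prev + f_drive) * v_support * dt   # trapezoid rule
        force_sum += f_drive

    e_tip, e_sub, e_int = energies(x, vx, u, vu, n_steps * dt)
    return {
        "work": float(work),
        "e_initial": float(e_initial),
        "e_final": float(e_tip + e_sub + e_int),
        "e_substrate": e_sub,
        "mean_lateral_force": float(force_sum / n_steps),
    }

if __name__ == "__main__":
    print(simulate_tomlinson())
```

Because there is no damping term, the work done by the support should match the change in total energy up to integration error. The share that ends up in `e_substrate` plays the role of dissipated heat in this toy picture, and `mean_lateral_force` is the friction-like quantity an experiment would read off.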

This classical insight maps directly onto how light behaves within a waveguide, where evanescent modes facilitate long-range communication between atoms. In these light channels, atoms act like the sliding particles in the Tomlinson model, but their 'friction' is the unwanted radiation that causes qubit decay. By tuning the waveguide geometry to control these evanescent modes, engineers can suppress the dipole-dipole interactions that lead to spontaneous emission. This technique effectively freezes the qubit in its state, providing the high qubit fidelity required for complex operations.
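How strongly geometry controls an evanescent mode can be estimated with a short calculation. The sketch below assumes an ideal, air-filled rectangular waveguide whose lowest (TE10) mode has cutoff frequency c/(2a) for width a. Below cutoff the field decays as exp(-kappa * d), and the code treats that same factor as the falloff of the mode-mediated coupling between two atoms, which is a simplified single-mode assumption rather than a result from the paper. The function names and the example numbers are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in vacuum (m/s)

def te10_cutoff_hz(width_m: float) -> float:
    """Cutoff frequency of the TE10 mode of an air-filled rectangular guide."""
    return C / (2.0 * width_m)

def decay_constant(freq_hz: float, width_m: float) -> float:
    """Evanescent decay constant kappa (1/m) for a frequency below cutoff."""
    k = 2.0 * math.pi * freq_hz / C   # free-space wavenumber
    k_c = math.pi / width_m           # cutoff wavenumber of the TE10 mode
    if k > k_c:
        raise ValueError("frequency is above cutoff: the mode propagates")
    return math.sqrt(k_c**2 - k**2)

def relative_coupling(freq_hz: float, width_m: float, separation_m: float) -> float:
    """Assumed exp(-kappa * d) falloff of the mode-mediated coupling."""
    return math.exp(-decay_constant(freq_hz, width_m) * separation_m)

if __name__ == "__main__":
    # Hypothetical example: a 5 GHz emitter in guides of two different widths.
    for width in (0.020, 0.015):
        kappa = decay_constant(5e9, width)
        print(f"width={width * 1e3:.0f} mm  cutoff={te10_cutoff_hz(width) / 1e9:.2f} GHz  "
              f"decay length={1e3 / kappa:.1f} mm")
```

In this picture a narrower guide raises the cutoff, increases kappa, and shortens the range over which two emitters can exchange energy, which is the sense in which geometry tunes the interaction.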

At the University of Buenos Aires, researchers led by Eduardo M. Bringa and colleagues developed the numerical framework that proves dissipation is an emergent property of system geometry rather than an inherent law. Their work demonstrates that when atoms are confined by independent harmonic potentials, the energy transfer is predictable and, more importantly, reversible. This reversibility is the cornerstone of fault-tolerant quantum computing, as it allows for the correction of errors before they become permanent.

Who's Moving

International Business Machines Corporation (IBM) is currently leading the hardware charge with its 1,121-qubit Condor processor, which serves as the primary testbed for these waveguide-based dissipation models. In April 2026, IBM announced a $500 million investment into its New York research facility to integrate evanescent mode control directly into its Quantum System Two architecture. This move aims to reduce the overhead required for the surface code, the leading error-correcting code for detecting and correcting bit-flip and phase-flip errors.

Simultaneously, Rigetti Computing, Inc. (RGTI) is deploying its Ankaa-3 system, which utilizes an 84-qubit architecture optimized for high-speed syndrome measurement. Rigetti's approach focuses on the rapid extraction of error data, a process that benefits significantly from the reduced noise profiles identified in the updated Tomlinson models. Among privately held companies, PsiQuantum has secured an additional $450 million in Series E funding to accelerate the development of its silicon photonic chips, which use light channels to connect distant clusters of qubits without introducing thermal noise.

Google Quantum AI, a division of Alphabet Inc. (GOOGL), remains a formidable competitor with its Sycamore processor family. Google is currently testing a new 72-qubit array that implements a refined version of the surface code, achieving error rates below the critical 0.1% threshold. Their research into 'heavy-flux' qubits mirrors the findings in the Tomlinson study, treating the qubit-environment interface as a dynamical system where energy loss is mitigated through precise periodic arrangement.
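For readers unfamiliar with syndrome measurement, the idea can be shown with a far simpler relative of the surface code. The sketch below is a classical three-bit repetition code, not the surface code and not any vendor's implementation. It handles bit flips only, and all function names are hypothetical. Two parity checks reveal where a flip occurred without reading the stored value directly, and a lookup table turns that syndrome into a correction.

```python
import random

def encode(bit):
    """Store one logical bit as three physical copies."""
    return [bit, bit, bit]

def syndrome(block):
    """Parity of neighbouring pairs; locates a flip without exposing the data."""
    return (block[0] ^ block[1], block[1] ^ block[2])

# Syndrome -> index of the bit to flip back (None means nothing was detected).
LOOKUP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(block):
    """Apply the single-bit correction suggested by the syndrome."""
    fixed = list(block)
    target = LOOKUP[syndrome(fixed)]
    if target is not None:
        fixed[target] ^= 1
    return fixed

def logical_error_rate(p, trials=20_000, seed=0):
    """Monte Carlo estimate of how often correction returns the wrong logical bit."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        # Flip each physical bit independently with probability p.
        noisy = [b ^ int(rng.random() < p) for b in encode(0)]
        failures += correct(noisy)[0]   # after correction all three bits agree
    return failures / trials

if __name__ == "__main__":
    for p in (0.01, 0.05, 0.2):
        print(f"physical error rate {p:.2f} -> logical error rate {logical_error_rate(p):.4f}")
```

A single flip is always repaired, while two or more flips in the same block fool the decoder. For small physical error rates that makes the logical error rate lower than the physical one, which is the basic bargain larger codes such as the surface code extend to phase errors as well.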

Why 2026 Is Different

The next 12 months will see the first demonstration of a logical qubit that lasts indefinitely through active suppression of evanescent-mode noise. Within three years, by 2029, the industry will transition from experimental error suppression to the first generation of fault-tolerant processors capable of running Shor's algorithm on small integers. By 2031, the market for quantum-resistant cryptography and molecular simulation is projected to reach $12 billion, driven by the reliability of these corrected systems.

The shift in 2026 is the move from 'noisy' hardware to 'corrected' software-defined qubits. The industry is no longer fighting dissipation with brute-force cooling alone; it is using the geometry of the waveguide to control where energy leaks. This engineering milestone marks the end of the NISQ (Noisy Intermediate-Scale Quantum) era and the beginning of the era of reliable, scalable quantum utility.

In short: Advanced quantum error correction now utilizes evanescent mode control to eliminate the emergent friction that causes decoherence in 1,000-qubit systems.