The bottleneck of modern computation is no longer the raw clock speed of a single processor but the physical movement of data between memory and logic. In April 2026, researchers demonstrated that simulating a complex quantum algorithm on classical high-performance computing (HPC) clusters requires the same structural innovations used to train energy-efficient spiking neural networks (SNNs). By treating quantum gate operations as pipelined data packets rather than static mathematical transformations, engineers are now easing the memory bottleneck that previously stalled large-scale circuit emulation, although the memory needed to hold the full quantum state still grows exponentially with the number of qubits. [DOI: 10.1109/TCASAI.2024.3496837]
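
The exponential part of the "memory wall" comes from simple arithmetic: a dense state-vector simulator stores 2^n complex amplitudes for n qubits. The sketch below assumes double-precision complex amplitudes (16 bytes each); the function names are illustrative, not taken from any of the cited work.

```python
def state_vector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory for a dense state vector holding 2**num_qubits amplitudes.

    The default of 16 bytes assumes complex128 (two float64 values);
    pass 8 for complex64.
    """
    return (2 ** num_qubits) * bytes_per_amplitude


def human_readable(num_bytes: int) -> str:
    """Format a byte count with binary units (KiB = 1024 B, and so on)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB"]
    value = float(num_bytes)
    for unit in units[:-1]:
        if value < 1024:
            return f"{value:.0f} {unit}"
        value /= 1024
    return f"{value:.0f} {units[-1]}"


for n in (30, 40, 50):
    print(f"{n} qubits: {human_readable(state_vector_bytes(n))}")
# 30 qubits: 16 GiB
# 40 qubits: 16 TiB
# 50 qubits: 16 PiB
```

Each extra qubit doubles the footprint, so at 50 qubits a single dense state vector needs about 16 PiB. Techniques such as pipelining and cache blocking reduce how often that data moves; they do not change how much of it there is.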

The Connection

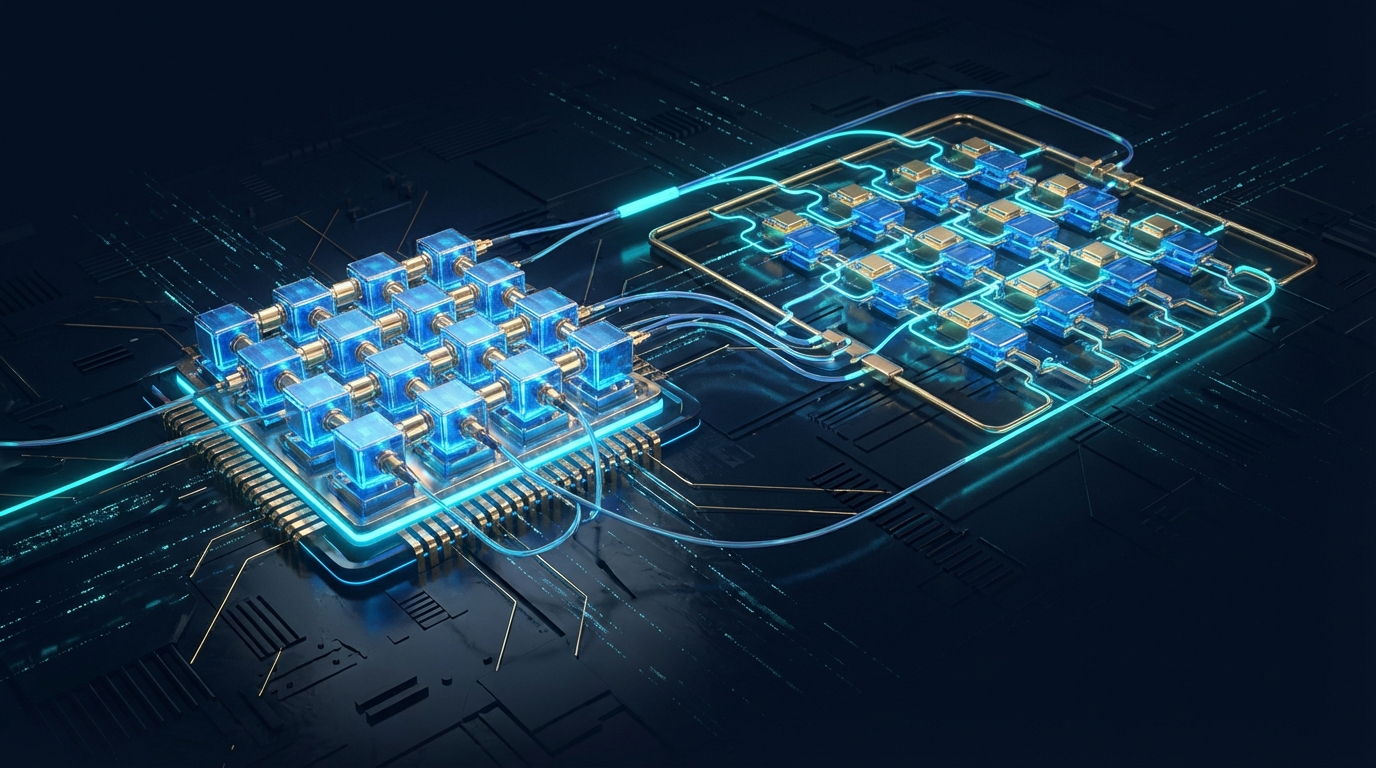

This matters because the hardware constraints of 2026 demand a convergence of neuromorphic architecture and quantum software engineering to achieve any meaningful quantum speedup. The timing is not coincidental: as physical quantum processors like IBM’s 1,121-qubit Condor reach their coherence limits, the industry is pivoting toward hybrid quantum-classical systems that rely on massive classical pre-computation. The SpikePipe framework for SNNs and the new HPC cache-blocking techniques for quantum circuits both address the same problem: maximizing data locality so that processors are not left idle waiting on memory during high-depth operations.
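
To make "cache blocking" concrete, here is a minimal pure-Python sketch of a dense state-vector simulator. It is an illustration under simple assumptions, not the HPC code discussed here, and names such as `apply_blocked` and `block_qubits` are hypothetical. A gate on a low-order qubit only mixes amplitudes inside aligned blocks of 2^b entries, so every such gate can be applied to one block while it sits in cache, instead of sweeping the whole vector once per gate.

```python
import math

# Single-qubit gates as 2x2 matrices ((g00, g01), (g10, g11)).
R = 1 / math.sqrt(2)
H = ((R, R), (R, -R))
X = ((0, 1), (1, 0))
S = ((1, 0), (0, 1j))


def apply_gate(state, gate, qubit, lo=0, hi=None):
    """Apply a 2x2 gate to `qubit` on the slice state[lo:hi], in place.

    Amplitude pairs (i, i + 2**qubit) are mixed; the slice must be aligned
    so that every such pair falls inside it.
    """
    if hi is None:
        hi = len(state)
    stride = 1 << qubit
    (g00, g01), (g10, g11) = gate
    for i in range(lo, hi):
        if i & stride == 0:
            j = i | stride
            a, b = state[i], state[j]
            state[i] = g00 * a + g01 * b
            state[j] = g10 * a + g11 * b


def apply_naive(state, gates):
    # One full sweep over the state vector per gate.
    for gate, qubit in gates:
        apply_gate(state, gate, qubit)


def apply_blocked(state, gates, block_qubits):
    # Cache blocking: walk the vector in blocks of 2**block_qubits amplitudes
    # and apply every gate to one block before moving on. Only valid for gates
    # on qubits below block_qubits, whose amplitude pairs stay inside a block.
    if any(qubit >= block_qubits for _, qubit in gates):
        raise ValueError("all gates must act on qubits < block_qubits")
    block = 1 << block_qubits
    for lo in range(0, len(state), block):
        for gate, qubit in gates:
            apply_gate(state, gate, qubit, lo, lo + block)


n = 4
circuit = [(H, 0), (X, 1), (S, 0), (H, 1)]
initial = [complex(k + 1, -k) for k in range(2 ** n)]  # arbitrary test amplitudes

reference, blocked = list(initial), list(initial)
apply_naive(reference, circuit)
apply_blocked(blocked, circuit, block_qubits=2)
print(max(abs(x - y) for x, y in zip(reference, blocked)))
```

Both orders compute the same amplitudes; in Python the point is the loop structure, not the speed. In a compiled simulator the blocked order keeps each block cache-resident across several gates. Gates on high-order qubits need extra handling (for example, remapping qubits into the low positions), which this sketch omits.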

How It Works

The core mechanism of this breakthrough is a technique called inter-layer pipelining, which decomposes a neural network or a quantum circuit into discrete temporal stages that execute across multiple systolic array processors simultaneously. In the SpikePipe architecture, published in IEEE Transactions on Circuits and Systems for Artificial Intelligence, the system maps training tasks onto multiprocessor schedules to hide much of the latency of backpropagation. This approach lets the hardware process new input spikes while other layers are still computing gradients for earlier inputs, much like a factory assembly line where every station remains busy.
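
The assembly-line analogy can be sketched with an idealized pipeline model. This is a generic illustration, not SpikePipe's scheduling algorithm: it assumes every stage takes one time step, models only forward work, and ignores communication. Stage s handles input t - s at step t, so S stages finish M inputs in S + M - 1 steps instead of S × M. The function names are hypothetical.

```python
def pipeline_schedule(num_stages, num_inputs):
    """Return one dict per time step mapping stage -> input index.

    Stage s works on input t - s at step t, so once the pipeline has
    filled, every stage is busy on a different input.
    """
    total_steps = num_stages + num_inputs - 1
    schedule = []
    for t in range(total_steps):
        busy = {s: t - s for s in range(num_stages) if 0 <= t - s < num_inputs}
        schedule.append(busy)
    return schedule


def utilization(schedule, num_stages):
    # Fraction of (stage, step) slots doing useful work.
    busy_slots = sum(len(step) for step in schedule)
    return busy_slots / (len(schedule) * num_stages)


stages, inputs = 4, 8
sched = pipeline_schedule(stages, inputs)
for t, step in enumerate(sched):
    print(t, step)

sequential_steps = stages * inputs
print(f"sequential: {sequential_steps} steps, pipelined: {len(sched)} steps")
# sequential: 32 steps, pipelined: 11 steps
print(f"utilization: {utilization(sched, stages):.2f}")
```

The idle slots at the start and end (fill and drain) are why utilization stays below 1. Real training pipelines must also interleave backward passes and balance unequal stage latencies, which is the problem a multiprocessor scheduler such as SpikePipe's is designed to handle.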

For quantum systems, the HPC simulation work introduces a "merge booster" and a "diagonal detector" to restructure the quantum algorithm before execution. These components rely on gate fusion, which combines consecutive gates acting on a small set of qubits into a single fused operation, reducing the number of gates the simulator must apply and therefore the number of passes over the state vector held in memory. On the SNN side, the SpikePipe authors (DOI: 10.1109/TCASAI.2024.3496837) report that "the proposed method achieves an average speedup of 1.6X compared to standard pipelining algorithms, with an upwards of 2X improvement in some cases." Optimizations of this kind cut the memory traffic per simulated gate, which helps keep simulations of variational circuits tractable at larger qubit counts, even though the size of the state vector itself still grows exponentially.
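
The source does not describe how the "merge booster" or "diagonal detector" work internally. The sketch below only illustrates the two underlying ideas under simple assumptions: runs of single-qubit gates on the same qubit are fused by multiplying their 2x2 matrices (applying g1 then g2 equals applying g2 · g1), and diagonal gates are flagged because they only multiply each amplitude by a phase. All function names are hypothetical, and this fuser merges only gates that are adjacent in the list; production fusers also exploit commutation and build multi-qubit fused blocks.

```python
import cmath
import math

R = 1 / math.sqrt(2)
H = ((R, R), (R, -R))
X = ((0, 1), (1, 0))
S = ((1, 0), (0, 1j))
T = ((1, 0), (0, cmath.exp(1j * math.pi / 4)))


def matmul2(a, b):
    """Matrix product a @ b of two 2x2 matrices stored as nested tuples."""
    return tuple(
        tuple(sum(a[r][k] * b[k][c] for k in range(2)) for c in range(2))
        for r in range(2)
    )


def fuse_single_qubit_runs(circuit):
    """Merge adjacent gates on the same qubit into one 2x2 matrix.

    `circuit` is a list of (matrix, qubit) pairs in application order.
    """
    fused = []
    for gate, qubit in circuit:
        if fused and fused[-1][1] == qubit:
            previous, _ = fused[-1]
            fused[-1] = (matmul2(gate, previous), qubit)  # later gate on the left
        else:
            fused.append((gate, qubit))
    return fused


def is_diagonal(gate, tol=1e-12):
    # A diagonal gate rescales each amplitude independently, so a simulator
    # can apply it element-wise without pairing amplitudes across memory.
    return abs(gate[0][1]) < tol and abs(gate[1][0]) < tol


circuit = [(H, 0), (H, 0), (T, 0), (X, 1), (S, 0)]
fused = fuse_single_qubit_runs(circuit)
print(len(circuit), "->", len(fused))  # 5 -> 3
print([is_diagonal(g) for g, _ in fused])  # [True, False, True]
```

Here H·H cancels to the identity, leaving T on qubit 0, so five gate applications become three, and two of those three can be applied as element-wise phase multiplications.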

Who's Moving

The landscape of 2026 is dominated by massive infrastructure investments from legacy tech giants and specialized startups. International Business Machines Corporation (NYSE: IBM) continues to lead the hardware race, but the software optimization layer is now a battleground for firms like Quantinuum and Riverlane, the latter of which secured $75 million in Series C funding to develop error-correction silicon. The SpikePipe research originates from a consortium of academic institutions focusing on systolic array-based processors, which are increasingly being integrated into NVIDIA Corporation (NASDAQ: NVDA) H200 Tensor Core GPU clusters to handle the heavy lifting of SNN training and quantum emulation.

In the public sector, the United States Department of Energy has allocated $175 million toward the development of the "Quantum-Classical Hybrid Interconnect," a project designed to link existing exascale supercomputers with NISQ-era processors. This initiative leverages the exact cache-blocking and gate-fusion techniques described in the April 2026 research to ensure that data transfer between the classical optimizer and the quantum hardware does not become a permanent bottleneck. These investments signify a shift from theoretical exploration to the hardening of the quantum software stack for industrial use.

Why 2026 Is Different

The next 12 months will see the transition of these pipelining techniques from academic papers to production-ready compilers. Within three years, the integration of SNN-inspired scheduling will allow for the simulation of 50-qubit circuits with a circuit depth exceeding 1,000 layers on standard cloud infrastructure. By 2031, the market for quantum-classical hybrid services is projected to reach $5.2 billion, driven by the pharmaceutical and materials science sectors. The ability to simulate a quantum algorithm with high fidelity on classical hardware is no longer just a debugging tool; it is a primary method for verifying the outputs of noisy physical qubits.

Conclusion

The convergence of neuromorphic pipelining and quantum circuit optimization suggests that the path to a functional quantum computer is paved with classical engineering ingenuity. In short: classical simulation of quantum algorithms increasingly relies on the same data-locality techniques, namely inter-layer pipelining, cache blocking, and gate fusion, that deliver speedups such as SpikePipe's reported 1.6X average over standard pipelining.